If you follow AI video closely, one name has appeared almost out of nowhere and instantly started taking over the conversation: Happy Horse AI. What makes the story interesting is not just the sudden attention. It is the way this model has moved through the space: little background, very little public explanation, and yet strong performance in blind comparison leaderboards that creators actually watch.

That is why this is now one of the most talked-about developments in AI video. A mysterious newcomer has shown up, people are testing it, and many are asking the same question: is this just a short-lived wave of hype, or is it a real sign that the ranking of top video models is changing?

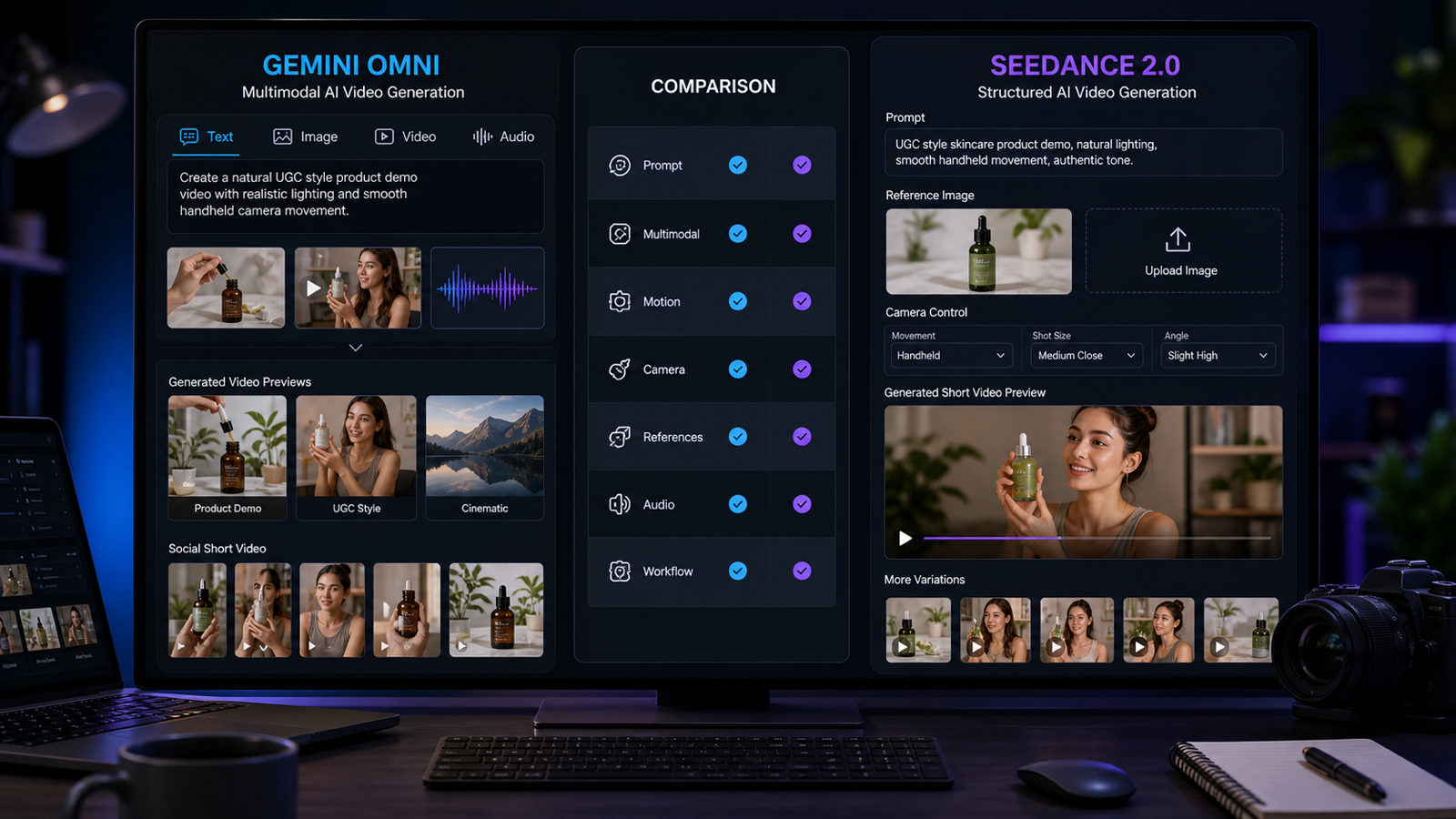

At the same time, this is also the right moment to compare it with a more established rival. Seedance 2.0 has a much clearer public product story, stronger documentation, and a more mature reputation among users who want controllable, production-ready outputs. So while the buzz is currently around Happy Horse, the real conversation is bigger than that. It is about mystery versus clarity, surprise momentum versus structured capability, and curiosity versus reliability.

For creators, marketers, and anyone looking for a dependable AI video generator, this comparison matters because leaderboard headlines alone do not tell the full story. A model can win attention quickly, but what really matters is how it fits into real workflows.

Why Happy Horse 1.0 became headline-worthy so fast

The biggest reason Happy Horse 1.0 is making news is simple: it climbed to the top of current blind-vote video leaderboards so quickly that people had to stop and ask what it was. In a crowded field where new releases appear all the time, very few models arrive with this kind of immediate impact.

That surprise factor is doing a lot of the work. When a model comes from a major, well-known company, people already expect polish, strong demos, and a marketing rollout. But Happy Horse 1.0 feels different. Its public identity is still relatively unclear compared with other major AI video tools, and that mystery is part of why creators are paying such close attention.

The model’s public-facing pitch is also easy to understand. It presents itself as a fast, cinematic video tool that can turn text or images into polished results with 1080p output, multi-shot storytelling, and optional audio. That combination is attractive because it speaks directly to how creators think: can this tool help me make clips that look dynamic, finished, and ready to post?

In other words, the news is not only that it ranked well. The news is that it ranked well while still feeling new, partly unexplained, and highly testable. That is a powerful combination in the current AI video market.

What is actually confirmed about Happy Horse right now

This is where the story becomes more interesting, and more useful. The smart way to look at Happy Horse 1.0 is to separate confirmed public information from speculation.

What seems clear right now is that the model has earned serious attention in current benchmark discussions and that its official-facing experience is built around accessibility. The pitch is straightforward: type a prompt or upload an image, generate a cinematic clip, and do it with as little friction as possible.

That matters because many users do not care first about architecture diagrams or technical naming. They care about what a tool lets them do in a browser. On that level, Happy Horse 1.0 is being discussed as a model that feels easy to approach while still aiming for polished motion, smoother scene transitions, and stronger visual drama than many quick-generation tools.

At the same time, the model’s rise has also triggered more questions than answers. Who is behind it? How stable are the results across different prompt types? Will it keep its current momentum as more users test it in more categories? Those questions are part of the story too, and they are exactly why the model feels newsworthy rather than merely trendy.

Why Seedance 2.0 is still the clearest model to compare against it

If Happy Horse 1.0 is the breakout mystery, Seedance 2.0 is the more fully explained alternative.

That gives it a very different kind of strength. Instead of relying on sudden buzz, Seedance 2.0 benefits from a clearer product identity. It is positioned as a multimodal video creation system with support for text, image, audio, and video references. That makes it easier to understand not just as a model that generates nice clips, but as a model designed for creators who want more direction, more control, and more consistency from one project to the next.

This difference is important. A lot of casual users judge a model by a few viral examples, but serious creators usually look at workflow depth. Can the model follow references well? Can it help preserve character or scene consistency? Can it do more than produce a single good-looking shot?

That is why Seedance 2.0 remains such a strong comparison point. Even if the current wave of conversation leans toward the surprise of Happy Horse, Seedance still appeals to users who want something that is easier to place inside a broader production pipeline.

Some users also first encountered the model through public demos and platform integrations tied to Higgsfield Seedance 2.0, which helped reinforce its reputation as more than just a leaderboard name. It feels like a tool with a clearer place in the market.

Chart: Current quality snapshot

Here is the simplest way to understand the conversation right now.

| Category | Happy Horse 1.0 | Seedance 2.0 | What it suggests |
|---|---|---|---|
| Text to video with audio | 1229 | 1225 | Essentially neck and neck |
| Text to video without audio | 1383 | 1273 | Happy Horse has the clearer lead |
| Image to video with audio | 1165 | 1164 | Almost a tie |
| Image to video without audio | 1413 | 1357 | Happy Horse leads again |

These numbers explain why Happy Horse 1.0 suddenly became part of so many AI video conversations. In blind comparisons, it is not just competitive: it scores higher in all four categories listed. But the table also shows something else. The two audio categories are much closer, with gaps of 4 and 1 points, against 110 and 56 points in the categories without audio. That means the lead is not equally decisive in every practical use case.
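To make those gaps more concrete, here is a minimal Python sketch that turns each score difference into an implied head-to-head win rate. It rests on an assumption the article does not confirm: that the leaderboard scores are Elo-style ratings on the standard 400-point logistic scale. The names `SCORES`, `expected_win_rate`, and `summarize` are illustrative only and do not belong to any leaderboard's tooling.

```python
# Scores copied from the table above: (Happy Horse 1.0, Seedance 2.0).
SCORES = {
    "Text to video with audio": (1229, 1225),
    "Text to video without audio": (1383, 1273),
    "Image to video with audio": (1165, 1164),
    "Image to video without audio": (1413, 1357),
}


def expected_win_rate(gap, scale=400.0):
    """Implied win probability for the higher-rated model.

    ASSUMPTION: scores behave like Elo ratings, so a gap of `scale`
    points corresponds to 10:1 odds. The article does not state which
    rating system the leaderboard actually uses.
    """
    return 1.0 / (1.0 + 10.0 ** (-gap / scale))


def summarize(scores):
    """Return (category, score gap, implied win rate) for each row."""
    rows = []
    for category, (happy_horse, seedance) in scores.items():
        gap = happy_horse - seedance
        rows.append((category, gap, expected_win_rate(gap)))
    return rows


if __name__ == "__main__":
    for category, gap, rate in summarize(SCORES):
        print(f"{category}: gap {gap:+d}, implied win rate {rate:.1%}")
```

If the Elo assumption holds, the 110-point gap in text to video without audio would mean Happy Horse 1.0 is preferred in roughly 65% of blind matchups, while the 4-point and 1-point audio gaps sit very close to a coin flip. If the leaderboard uses a different rating scale, only the raw gaps carry over.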

Chart: Workflow comparison for real creators

Leaderboard wins are exciting, but users usually need more than a scoreboard. They need to know which model fits their actual process.

| Comparison point | Happy Horse 1.0 | Seedance 2.0 |
|---|---|---|
| First impression | The surprise breakout model | The more clearly positioned pro workflow option |
| Public product story | Simple, cinematic, easy to test | More structured and feature-rich |
| Input style | Text and image focused in public-facing use | Text, image, audio, and video references |
| Audio angle | Attractive but still part of a developing public story | More clearly integrated into the model identity |
| Best fit | Fast experiments, trend testing, discovery | Directed creation, reference-heavy workflows |
| Buyer mindset | “I want to see why everyone is talking about this” | “I want a model I can build a repeatable process around” |

This is where Seedance 2.0 continues to make a strong case. Even when another model is winning the buzz cycle, a clearer workflow can still be the deciding factor for professionals.

So which model feels more useful right now?

The honest answer is that they are useful in different ways.

If you are the kind of creator who wants to test the newest thing everyone is discussing, Happy Horse 1.0 is the obvious curiosity pick. It has momentum, a strong visual pitch, and the kind of current leaderboard profile that makes people want to try it for themselves.

If you are the kind of user who cares about repeatability, richer input control, and a more structured creative system, Seedance 2.0 may still feel like the safer and smarter choice.

That does not make one “the winner” in every sense. It simply means the story is more nuanced than a single ranking. Happy Horse 1.0 is the model driving the latest conversation, while Seedance 2.0 is still one of the strongest answers for users who want depth as well as quality.

The bigger takeaway for creators

The most important lesson here is that AI video is moving into a phase where surprise challengers can change the conversation almost overnight. A new model does not need a long runway to matter anymore. If it performs well, creators will notice immediately.

But attention and long-term value are not always the same thing. Today’s headline story is Happy Horse 1.0, and that is fair. Its sudden rise makes it one of the most interesting AI video developments right now. Still, the comparison with Seedance 2.0 is a reminder that public clarity, multimodal control, and workflow stability matter just as much as hype.

For most readers, the best approach is not to treat this as a fandom battle. Treat it as a practical question: do you want to explore the breakout model of the moment, or do you want a more clearly defined video system with stronger production logic?

That is why this comparison matters. It helps turn AI video news into a real decision.

Recommended VideoWeb Models and Tools

- Explore VideoWeb AI for a broader all-in-one creation hub.

- Try the Image to Video tool for quick visual animation and trend testing.

- Use Text to Video when you want prompt-first video generation.

- Check the dedicated Seedance 2.0 video page for a more reference-driven workflow.
