The Wan model family has evolved quickly. Until recently, Wan 2.5 was regarded as one of the most capable open or semi-open video generators available: stable, versatile, and approachable for creators who needed fast, reliable output. With the arrival of Wan 2.6, creators are asking whether the upgrade is truly transformative or just another incremental refresh.

Spoiler: Wan 2.6 is a much bigger leap than many expected.

Wan 2.6 doesn’t simply refine visuals; it expands the model’s entire purpose. Motion is smoother and more stable. The text-to-video and image-to-video pipelines interpret their inputs more intelligently. And the most talked-about addition, video generation with audio, finally brings native lip-sync and speech alignment into the Wan ecosystem.

If you've been wondering whether to switch, or whether Wan 2.6 is truly “better” than Wan 2.5 in meaningful ways, this full breakdown clarifies exactly what has changed—and why those changes matter.

Wan 2.5: A Strong Baseline That Needed a Push

Before appreciating Wan 2.6, it’s helpful to understand what Wan 2.5 brought to the table.

For many creators, 2.5 was the workhorse: quick rendering, decent realism, and cleaner motion compared to earlier versions. It handled casual clips, product videos, stylized content, and simple talking segments with competence. But as demand for higher realism grew, certain limitations became clear.

Wan 2.5 struggled most with:

- identity drift in portrait and character clips

- inconsistent details between frames

- limited facial animation and rudimentary mouth movement

- jittery motion in complex scenes

- erratic lighting behavior in dynamic environments

- limited ability to interpret multi-step prompts

- no true audio-visual sync, forcing users into heavy post-processing

The model remained popular because it was reliable and easy to use—but everyone knew it was approaching its ceiling.

Wan 2.6 changes that ceiling dramatically.

Wan 2.6: What’s Actually New?

The jump from Wan 2.5 to Wan 2.6 feels like a shift in philosophy: from “good enough for everyday use” to “powerful enough for professional-quality clips.” The core improvements fall into four major categories: visual coherence, prompt intelligence, identity retention, and audiovisual alignment.

1. Better Visual Coherence and Motion Stability

In early tests, Wan 2.6 shows smoother movement and significantly less jitter. Lighting transitions are more natural, shadows behave consistently, and backgrounds no longer flicker during camera motion.

These improvements solve a key frustration in Wan 2.5: even when scenes looked nice, they sometimes felt “AI-generated.” Wan 2.6 reduces that uncanny feeling and gives videos a more intentional aesthetic.

This stability also applies to longer clips. Where Wan 2.5 began to break down after around 5–7 seconds, many Wan 2.6 clips maintain coherence throughout full sequences.
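One rough way to put a number on the flicker described above is to average the pixel change between consecutive frames over a region that should stay still, such as a static background. The sketch below assumes the frames have already been decoded into flat lists of grayscale values. `flicker_score` is a hypothetical helper, not part of any Wan tooling, and it cannot distinguish intended motion from jitter, so it is only meaningful on regions that are supposed to be static.

```python
from statistics import mean


def flicker_score(frames):
    """Mean absolute pixel change between consecutive frames.

    `frames` is a list of equally sized frames, each a flat list of
    grayscale values. Lower scores mean less frame-to-frame change.
    """
    if len(frames) < 2:
        return 0.0
    per_step = []
    for prev, curr in zip(frames, frames[1:]):
        # Average absolute difference across all pixels for this step.
        per_step.append(mean(abs(a - b) for a, b in zip(prev, curr)))
    return mean(per_step)


# Toy data: a perfectly steady patch versus one that pulses in brightness.
steady = [[10, 10, 10, 10] for _ in range(3)]
pulsing = [[10, 10], [20, 20], [10, 10]]
print(flicker_score(steady), flicker_score(pulsing))
```

Running the same measurement on the same background crop from a Wan 2.5 clip and a Wan 2.6 clip would give a like-for-like comparison, provided both clips use the same prompt and camera move.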

2. Stronger Prompt Interpretation (Text-to-Video)

One of the biggest surprises is how much the text-to-video engine has improved. Wan 2.6 now understands more complex prompts, including:

- multi-character interactions

- camera instructions

- emotional cues

- timing sequences

- layered environments

- transitions between actions

This makes it easier to produce short narratives rather than “single-scene” clips. For creators who write detailed prompts, Wan 2.6 simply feels smarter.

By contrast, Wan 2.5 often gave literal but shallow interpretations—functional, but not expressive.
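For creators who write detailed prompts, it can help to keep the elements listed above (characters, camera instructions, emotional cues, and the order of actions) in a small structure and render them into one prompt string. The sketch below is only an illustration: the `ScenePrompt` and `Beat` names and the output wording are assumptions made here, and Wan does not require any particular prompt format.

```python
from dataclasses import dataclass, field


@dataclass
class Beat:
    """One step in the sequence: an action plus optional direction."""
    action: str
    camera: str = ""
    emotion: str = ""


@dataclass
class ScenePrompt:
    """Hypothetical container for a multi-step text-to-video prompt."""
    setting: str
    characters: list[str] = field(default_factory=list)
    beats: list[Beat] = field(default_factory=list)

    def render(self) -> str:
        parts = [f"Setting: {self.setting}."]
        if self.characters:
            parts.append("Characters: " + ", ".join(self.characters) + ".")
        # Number the beats so the intended order of actions is explicit.
        for i, beat in enumerate(self.beats, start=1):
            details = [beat.action]
            if beat.camera:
                details.append(f"camera: {beat.camera}")
            if beat.emotion:
                details.append(f"mood: {beat.emotion}")
            parts.append(f"Beat {i}: " + "; ".join(details) + ".")
        return " ".join(parts)


prompt = ScenePrompt(
    setting="a rainy street at night",
    characters=["a courier", "a shopkeeper"],
    beats=[
        Beat("the courier runs to the door", camera="slow push-in"),
        Beat("the shopkeeper opens it", emotion="relieved"),
    ],
)
print(prompt.render())
```

Keeping the beats as separate records makes it easy to reorder or drop a step and regenerate, which is useful when comparing how the two versions handle the same multi-step prompt.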

3. More Accurate Identity Retention (Image-to-Video)

This is one of the most immediately noticeable upgrades. The image-to-video workflow in Wan 2.6 is dramatically better at keeping characters consistent throughout motion. Faces no longer distort on angled turns, hairstyles stay stable, and proportions remain natural.

This is crucial for:

- avatar creators

- influencers

- portrait-based content

- animators

- product-to-video creators

- cosplay transformations

Wan 2.5 sometimes produced beautiful stills but struggled with maintaining a consistent identity as characters moved. Wan 2.6 finally closes that gap.
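Identity drift can be checked roughly by comparing a face embedding from each frame against the embedding from the first frame. The sketch below assumes the embeddings already exist as plain lists of floats produced by some face-embedding model of your choice; that model, and the `identity_drift` helper itself, are assumptions for illustration and not something the Wan tooling is known to provide.

```python
import math


def cosine_similarity(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        raise ValueError("cannot compare a zero-length vector")
    return dot / (norm_a * norm_b)


def identity_drift(embeddings):
    """Worst-case drift from the first frame, as 1 - minimum similarity.

    0.0 means every frame matches the reference exactly; larger values
    mean at least one frame has moved further from the reference.
    """
    if len(embeddings) < 2:
        return 0.0
    reference = embeddings[0]
    similarities = [cosine_similarity(reference, e) for e in embeddings[1:]]
    return 1.0 - min(similarities)


# Toy vectors standing in for real per-frame face embeddings.
stable_clip = [[1.0, 0.0], [1.0, 0.0], [1.0, 0.0]]
drifting_clip = [[1.0, 0.0], [0.0, 1.0]]
print(identity_drift(stable_clip), identity_drift(drifting_clip))
```

Using the minimum similarity rather than the average highlights the single worst frame, which is usually what viewers notice in a face that distorts on an angled turn.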

4. Audio and Lip-Sync: The Breakthrough Feature

Native audio support is the most significant addition in Wan 2.6.

Wan 2.5 had no native audio-video alignment. Talking characters, narrators, or spokesperson videos typically required tedious manual syncing. Wan 2.6 introduces:

- phoneme-aware lip shapes

- emotional micro-expressions

- aligned jaw movement

- natural blinking and head motion

- pacing that matches voice rhythm

Suddenly, Wan becomes viable for talking-head content, AI presenters, teaching videos, corporate messaging, and any scenario where a character must speak convincingly.

For many creators, this feature alone justifies the upgrade.

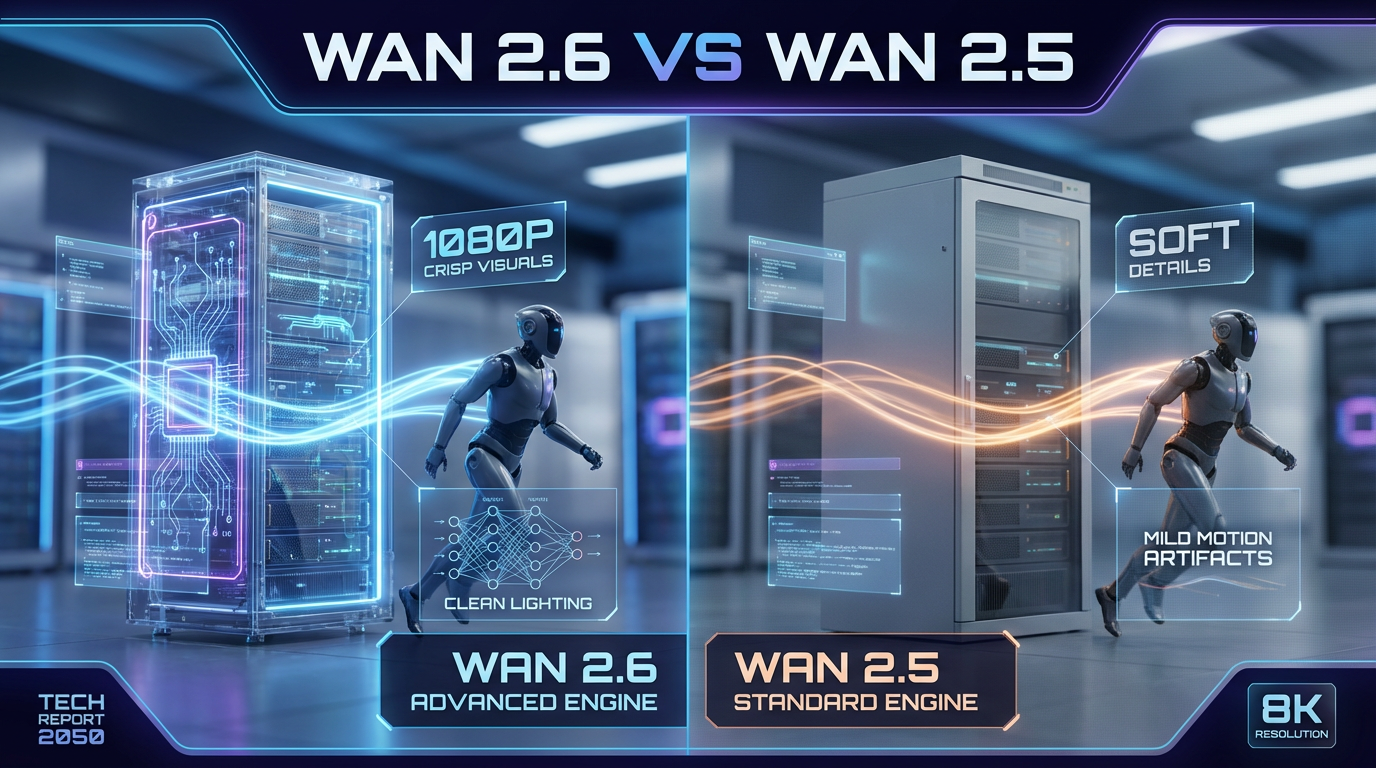

Side-by-Side Breakdown: Wan 2.6 vs Wan 2.5

Below is a structured comparison of key features.

Comparison Chart: Wan 2.6 vs Wan 2.5

| Feature Category | Wan 2.5 (Baseline) | Wan 2.6 (New Release) |
|---|---|---|
| Visual Coherence | Good but inconsistent in complex scenes | Significantly smoother, stable across long shots |
| Motion Stability | Occasional jitter, artifacting | Clean movement, improved temporal consistency |
| Text-to-Video Interpretation | Literal, limited multi-step logic | More intelligent, handles complex scripted prompts |
| Image-to-Video Identity | Face drift common | Strong identity retention, accurate facial structure |
| Lighting & Shadows | Unpredictable under dynamic motion | More realistic, smoother transitions |
| Audio Sync | No native support | Full lip-sync, phoneme matching, emotional expressions |
| Character Animation | Limited expression range | More expressive, lifelike movements |
| Render Reliability | Occasional misfires | More consistent output per prompt |
| Best Use Cases | Simple clips, stylized videos | Talking videos, portraits, ads, storytelling |

This overview makes the jump extremely clear: Wan 2.6 is not a minor upgrade. It restructures what the model can do.

Text-to-Video: Precision vs Interpretation

One of the clearest advantages of Wan 2.6 is how it processes and visualizes prompts. Creators rely on text-to-video tools to handle increasingly complex instructions, and the improved text-to-video behavior in Wan 2.6 demonstrates a deeper semantic understanding.

Where Wan 2.5 sometimes glossed over secondary details, Wan 2.6 incorporates:

- environmental cues

- object relationships

- sequence logic

- camera direction

- emotional tone

This means fewer retries and less prompt engineering—an immediate productivity boost.

Image-to-Video: Stability That Matters

The image-to-video system in Wan 2.6 is arguably the most improved area for creators who rely on character-driven content. Brand ambassadors, VTubers, cosplayers, and digital influencers all require videos where identity consistency is non-negotiable.

Wan 2.6 handles:

- profile views

- expressive movement

- dynamic lighting

- clothing consistency

with far fewer errors. The difference is visible even in casual tests.

Audio Sync and Talking Characters: A New Advantage

Nothing in Wan 2.5 prepared creators for how well Wan 2.6 handles talking-head videos. Native audio support turns Wan from a purely visual engine into a more complete storytelling tool.

Users can now generate:

- spokesperson videos

- animated presenters

- explainer content

- educational modules

- product narrations

- character dialogues

without relying on external animation tools for lip-sync.

For many businesses, this replaces multiple steps in their production pipeline.

Workflow Differences: What It’s Like to Use Wan 2.6

Wan 2.6 doesn’t just create better videos—it creates them with less effort.

Prompting Is Easier

You don’t need ultra-complicated prompts to get good results. Wan 2.5 often required repeated refinement; Wan 2.6 follows prompts more closely and responds more predictably to changes.

Less Post-Editing

Because faces stay consistent and lip-sync is native, the need for stabilization tools or audio-matching software significantly decreases.

Faster Content Turnaround

Wan 2.6 maintains Wan’s reputation for solid generation speed while improving reliability.

For creators who produce content daily, this translates to a major efficiency jump.

Real-World Scenarios Where Wan 2.6 Is a Clear Winner

1. Talking-Head and Presenter Videos

The audio-sync upgrade transforms business and educational content creation.

2. Influencer Shorts and Reels

Wan 2.6 produces smoother, more stylish motion—ideal for fast-paced social content.

3. Brand and Product Videos

Improved prompt interpretation results in more polished, on-brand videos.

4. Portrait, Avatar, and Character-Based Clips

Identity retention is dramatically better than Wan 2.5, making character continuity easy.

5. AI Storytelling and Explainer Series

With more stable text-to-video sequencing, creators can build consistent multi-scene narratives.

Where Wan 2.5 Still Has a Role

Even though Wan 2.6 is superior in most areas, Wan 2.5 still has value, especially when:

- audio isn’t required

- videos are simple and short

- you need extremely fast rendering
