The Dawn of Hailuo 2.3 — A New Era in AI Video Generation

Artificial intelligence has already transformed text, art, and sound, and now it’s rewriting the language of cinema. Enter the Hailuo 2.3 AI video generator, the latest and most ambitious version of Hailuo’s creative engine. It blends narrative precision with dynamic visual control, making it one of the most complete Hailuo releases to date.

While its predecessor pushed the boundaries of realism, Hailuo 2.3 builds upon that legacy with greater depth, adaptability, and cinematic intelligence. It’s not just a tool for creating short clips — it’s an ecosystem that empowers artists to think like directors.

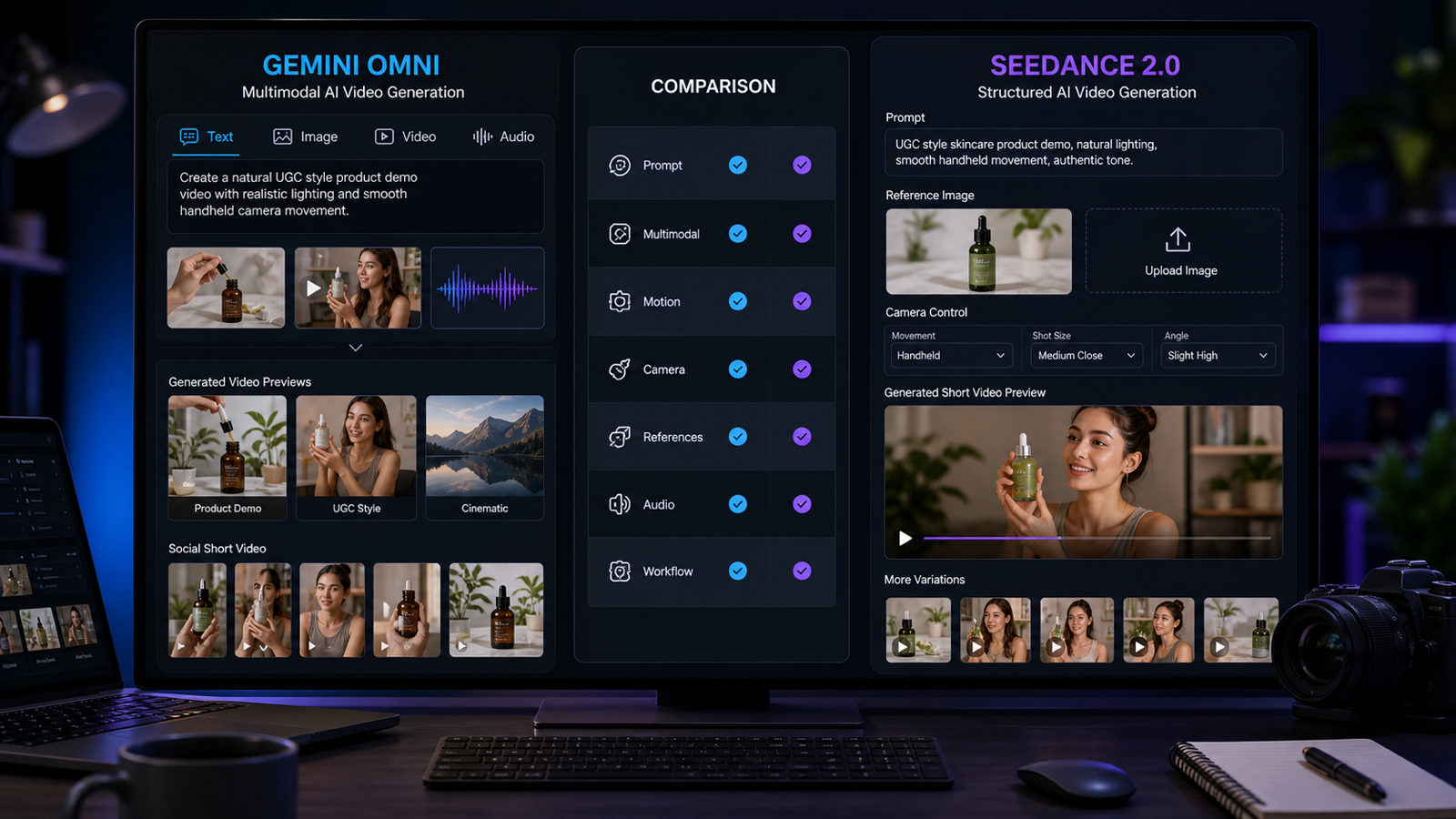

In this article, we’ll explore what makes Hailuo 2.3 exceptional, and how it compares with other major contenders in the field — including VIDU 2.0, Veo 3.1 Video, Kling 2.5, Wan 2.5, Seedance 1.0, and Sora 2 AI.

The goal isn’t just to crown a winner — it’s to understand how each model contributes to the evolving frontier of machine-generated video, and why Hailuo 2.3 might represent the next creative leap.

What Makes Hailuo 2.3 a Landmark Innovation

The Hailuo 2.3 AI video platform is defined by three words: realism, rhythm, and responsiveness. It doesn’t simply generate frames; it orchestrates them.

At its heart lies a hybrid engine combining diffusion-based video synthesis with temporal memory mapping. This allows for dynamic continuity across frames — one of the most difficult problems in AI cinematography. Instead of producing disconnected moments, Hailuo 2.3 understands scene flow, emotional tone, and camera logic.

1. Smarter Motion Fidelity

Hailuo 2.3 achieves remarkable consistency in body physics, lighting reflection, and spatial awareness. A dancer’s shadow moves with their rhythm, waves crash naturally against a shoreline, and camera pans remain smooth even during fast-paced action.

2. Enhanced Image-to-Video Pipeline

One of its standout features is the Hailuo 2.3 image-to-video workflow. Users can upload a static image (a digital painting, portrait, or product photo) and watch it transform into a cinematic motion clip. The system builds a 3D depth map from 2D data, creating lifelike parallax and lens behavior.

This dual-input approach (text-to-video and image-to-video) makes Hailuo 2.3 far more versatile than most models in its class.
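The depth-based parallax mentioned above can be illustrated with a small sketch. This is not Hailuo's implementation, which is not public; it only shows the general idea that, once each pixel has a depth estimate, a sideways camera move displaces near pixels more than far ones. The function names, the `focal_length` parameter, and the sign convention are all assumptions made for illustration.

```python
def parallax_shift(depth, camera_shift, focal_length=1.0):
    """Apparent horizontal displacement (in pixels) of a point at `depth`
    when a hypothetical camera moves sideways by `camera_shift`.
    Nearer points (small depth) move more than distant ones."""
    if depth <= 0:
        raise ValueError("depth must be positive")
    return focal_length * camera_shift / depth


def shift_row(pixels, depths, camera_shift, focal_length=1.0, fill=None):
    """Forward-warp a single image row using per-pixel depth.
    When two pixels land on the same target, the nearer one wins.
    Targets that receive no pixel keep the `fill` value."""
    width = len(pixels)
    out = [fill] * width
    out_depth = [float("inf")] * width
    for x, (value, depth) in enumerate(zip(pixels, depths)):
        new_x = x + round(parallax_shift(depth, camera_shift, focal_length))
        if 0 <= new_x < width and depth < out_depth[new_x]:
            out[new_x] = value
            out_depth[new_x] = depth
    return out


# Foreground pixels "a" and "b" (depth 1) slide over background "c" (depth 4).
row = shift_row(["a", "b", "c", "d"], [1.0, 1.0, 4.0, 4.0], camera_shift=1.0)
```

The empty slot left at the start of `row` is the kind of gap a generative model would have to fill in with plausible content as the virtual camera moves.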

3. Cinematic Control

Through prompt design, users can direct camera movement, emotion, tone, and rhythm. Hailuo 2.3 understands modifiers like “tracking shot,” “close-up,” “handheld,” or “slow cinematic dolly.” Combined with improved lighting logic, the results feel authored rather than automated.

4. Broader Style Adaptability

Whether you’re rendering anime, surrealist dreamscapes, or corporate promo videos, Hailuo adapts seamlessly. It maintains stylistic integrity without blurring character edges or destabilizing motion — a challenge that many competitors still struggle with.

The Competitive Landscape of AI Video Models

While Hailuo 2.3 stands tall, it operates within a crowded and fast-moving ecosystem of advanced AI video models. Let’s explore the peers shaping the industry and how they differ.

VIDU 2.0

VIDU 2.0 is known for its exceptional text-to-video efficiency. It generates short cinematic clips in seconds, emphasizing speed over fine detail. Its streamlined architecture appeals to marketers and social content creators who prioritize turnaround time. However, its motion stability and lighting realism often remain one step behind Hailuo’s more refined physics engine.

Veo 3.1 Video

Developed by Google DeepMind, Veo 3.1 delivers striking realism and depth. It shines in compositional control and storytelling logic, but in this comparison it offers less image-to-video flexibility than the Hailuo 2.3 AI video generator. Veo’s clips often prioritize cinematic structure, yet they remain heavily text-dependent.

Kling 2.5

Kuaishou’s Kling 2.5 is built for performance. It’s one of the fastest AI renderers, ideal for mass content production. It supports 4K output and strong character animation, but at times sacrifices narrative flow and emotion, areas where Hailuo excels.

Kling’s strength lies in consistency for long scenes, whereas Hailuo 2.3 shines in emotional precision and lighting artistry.

Wan 2.5

Wan 2.5, developed by Alibaba, combines powerful scene generation with a robust AI editing layer. It supports long sequences, object tracking, and realistic background synthesis. While Wan’s technical capabilities are vast, its interface and creative controls are more developer-oriented. Hailuo 2.3, by contrast, is designed for artists: intuitive, fluid, and creative first.

Seedance 1.0

A relative newcomer, Seedance 1.0 specializes in human motion analysis and dance visualization. It uses biomechanical modeling to reproduce real-world movement from text or reference footage. It’s excellent for choreographed or stylized animation — though it lacks Hailuo’s cinematic scope and environmental realism.

Sora 2 AI

OpenAI’s Sora 2 AI is perhaps Hailuo’s most well-known rival. Sora’s strength is extreme realism — capable of producing ultra-high-resolution, photorealistic clips that border on live-action footage. Yet, this power comes with computational complexity. Sora excels in realism but offers limited fine-grained artistic control compared to Hailuo’s prompt-driven camera dynamics.

Hailuo 2.3 vs. The Field — Comparative Breakdown

| Category | Hailuo 2.3 AI Video Generator | Veo 3.1 Video | Kling 2.5 | Wan 2.5 | VIDU 2.0 | Seedance 1.0 | Sora 2 AI |
|---|---|---|---|---|---|---|---|
| Input Modes | Text + Image | Text only | Text + Ref Video | Text + Image | Text only | Text + Motion | Text only |
| Output Realism | Cinematic, emotional | Naturalistic, narrative | Realistic, fast | Technical, industrial | Moderate | Stylized motion | Ultra-realistic |
| Creative Control | High (camera, light, pacing) | Medium | Low | Developer-driven | Low | Limited | Moderate |
| Speed | Medium-Fast | Medium | Fastest | Medium | Very fast | Medium | Slow (high compute) |
| Strength | Cinematic depth + style fusion | Realistic storytelling | Efficiency | Long-form scenes | Speed | Human dance simulation | Photorealism |
| Limitation | Shorter duration | Less artistic control | Basic motion physics | Complex UI | Lower fidelity | Niche use | High resource demand |

The table highlights a simple truth: Hailuo 2.3 doesn’t aim to be the fastest or most photorealistic — it aims to be the most creative. It’s the model that gives directors, designers, and digital artists a sense of authorship.

Use-Case Scenarios — When to Choose Hailuo 2.3

1. For Storytellers

Writers and filmmakers who want to visualize scripts or trailers will find Hailuo 2.3 intuitive. Type “a hero walking across a desert at dawn, camera tracking from behind” — and you’ll get coherent composition, color, and pacing.

Other models like VIDU 2.0 or Kling 2.5 might deliver the scene faster, but few can match Hailuo’s sense of cinematic presence.

2. For Visual Artists and Designers

The Hailuo 2.3 image-to-video function is ideal for transforming digital artwork into living motion. Illustrators can upload a static landscape or character portrait and produce fluid movement that retains painterly textures, something that Veo 3.1 Video still struggles to maintain.

3. For Advertisers and Brands

Product designers can turn concept sketches into elegant motion prototypes. A luxury watch, for instance, can rotate under studio lighting with reflections rendered in physically accurate detail. Compared with Kling 2.5 or Wan 2.5, Hailuo 2.3 trades brute speed for visual sophistication.

4. For Motion Experiments and Short-Form Content

Platforms like TikTok and Instagram reward eye-catching motion. Seedance 1.0 might specialize in choreographic precision, but Hailuo 2.3 gives creators the tools to blend choreography with story — a dancer in an environment that moves with emotion, not just movement.

5. For Concept Development and Pre-Visualization

Directors can use Hailuo to create moodboards, camera tests, or previs sequences that inform larger projects. Its ability to simulate different lighting conditions, weather, and movement paths makes it a valuable previsualization tool ahead of real-world production.

Strengths, Weaknesses, and Creator Insights

Strengths

- Dual-Input Workflow: Text-to-video + image-to-video capabilities.

- Cinematic Precision: Intelligent understanding of light, camera angles, and emotion.

- Stable Motion: Natural body physics and camera flow.

- Style Adaptability: From hyper-realism to painterly abstraction.

- Creative Freedom: Supports detailed prompts with real-time scene logic.

Weaknesses

- Rendering Time: Complex scenes take longer than lighter models like VIDU 2.0.

- Clip Duration: Typically limited to around 10–12 seconds per generation.

- Computational Demand: Higher fidelity requires more GPU cycles.

- Learning Curve: The richness of prompt control may feel overwhelming to beginners.
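Because of the clip-duration limit listed above, a longer sequence has to be planned as several generations. The sketch below shows the arithmetic. It assumes a 10-second ceiling (the lower end of the range quoted above), and `plan_clips` is a hypothetical helper, not part of any Hailuo tooling.

```python
import math


def plan_clips(total_seconds, max_clip_seconds=10.0):
    """Split a target running time into the fewest equal-length clips
    that each fit under the per-generation limit (an assumed value)."""
    if total_seconds <= 0 or max_clip_seconds <= 0:
        raise ValueError("durations must be positive")
    count = math.ceil(total_seconds / max_clip_seconds)
    return [total_seconds / count] * count


# A 25-second sequence needs three generations of roughly 8.3 seconds each.
clips = plan_clips(25)
```

Equal-length clips are only one possible choice; in practice, cut points would more likely follow shot boundaries in the script.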

Pro-Tips for Creators

- Write prompts with scene logic (camera type, emotion, lighting, action).

- For image-to-video, use high-contrast source images for better depth extraction.

- Use emotional tone keywords (melancholic, energetic, intimate) to shape atmosphere.

- Blend text and image input for maximum creative control.
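The first and third tips can be made routine with a small prompt template. The sketch below is a hypothetical helper for assembling prompt text only: it does not call any Hailuo API, and the field names (`action`, `camera`, `lighting`, `emotion`) are assumptions chosen to mirror the tips above.

```python
from dataclasses import dataclass


@dataclass
class ShotPrompt:
    """Illustrative container for the parts of a scene-logic prompt."""
    action: str
    camera: str = ""
    lighting: str = ""
    emotion: str = ""

    def render(self) -> str:
        """Join the non-empty parts into one comma-separated prompt string."""
        parts = [self.action, self.camera, self.lighting]
        if self.emotion.strip():
            parts.append(f"{self.emotion.strip()} mood")
        return ", ".join(p.strip() for p in parts if p.strip())


prompt = ShotPrompt(
    action="a hero walking across a desert at dawn",
    camera="tracking shot from behind",
    lighting="low golden light",
    emotion="melancholic",
).render()
```

Keeping each element in its own field makes it easy to vary one thing at a time, for example swapping "tracking shot" for "handheld" while holding the action and lighting fixed, and to compare the resulting clips.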

The Future of AI Video Creation

Hailuo 2.3’s launch signals a broader trend: video AI models are no longer competing just on realism — they’re competing on authorship.

Next-generation systems will merge script-to-scene, sound integration, and real-time editing, allowing creators to produce full short films interactively. Imagine a workflow where you describe a scene, generate visuals through Hailuo 2.3 AI video generator, add background sound via AI audio models, and edit everything within one unified environment.

Models like Sora 2 AI and Veo 3.1 Video are also heading in that direction, but Hailuo’s dual-input approach could make it the creative center of this ecosystem — capable of interpreting both imagination and imagery.

As computation scales and datasets grow, we may see:

- Real-time rendering of full 30-second cinematic scenes.

- AI-directed continuity editing (cutting between camera angles).

- Hybrid collaboration where humans write scripts and AIs visualize in parallel.

The line between idea and film will blur even further.

Conclusion — The Cinematic Frontier of Hailuo 2.3

At its core, Hailuo 2.3 isn’t competing against machines; it’s collaborating with imagination. Where models like Kling 2.5 chase speed and Sora 2 AI aims for hyperrealism, Hailuo 2.3 embraces artistry, giving creators control over light, tone, and storytelling rhythm.

It’s more than a generator; it’s a visual language. A tool for digital cinematographers, illustrators, marketers, and dreamers — all unified by one goal: to turn thought into motion.

AI video has entered its cinematic phase, and the Hailuo 2.3 AI video generator stands at its center, not as a machine but as a creative partner.

Whether you’re crafting a filmic vision, animating a still, or exploring new artistic workflows, Hailuo 2.3 proves one thing: the story of tomorrow’s cinema will be written not in scripts or cameras, but in prompts — and the lens through which imagination moves will be powered by AI.