If you’ve ever tried to generate a talking character video and felt disappointed, you’re not alone.

A lot of “AI talking head” clips look okay for the first second… then the cracks show: the mouth timing drifts, the eyes feel lifeless, the hands start doing strange things, and the background looks like it belongs to a different universe.

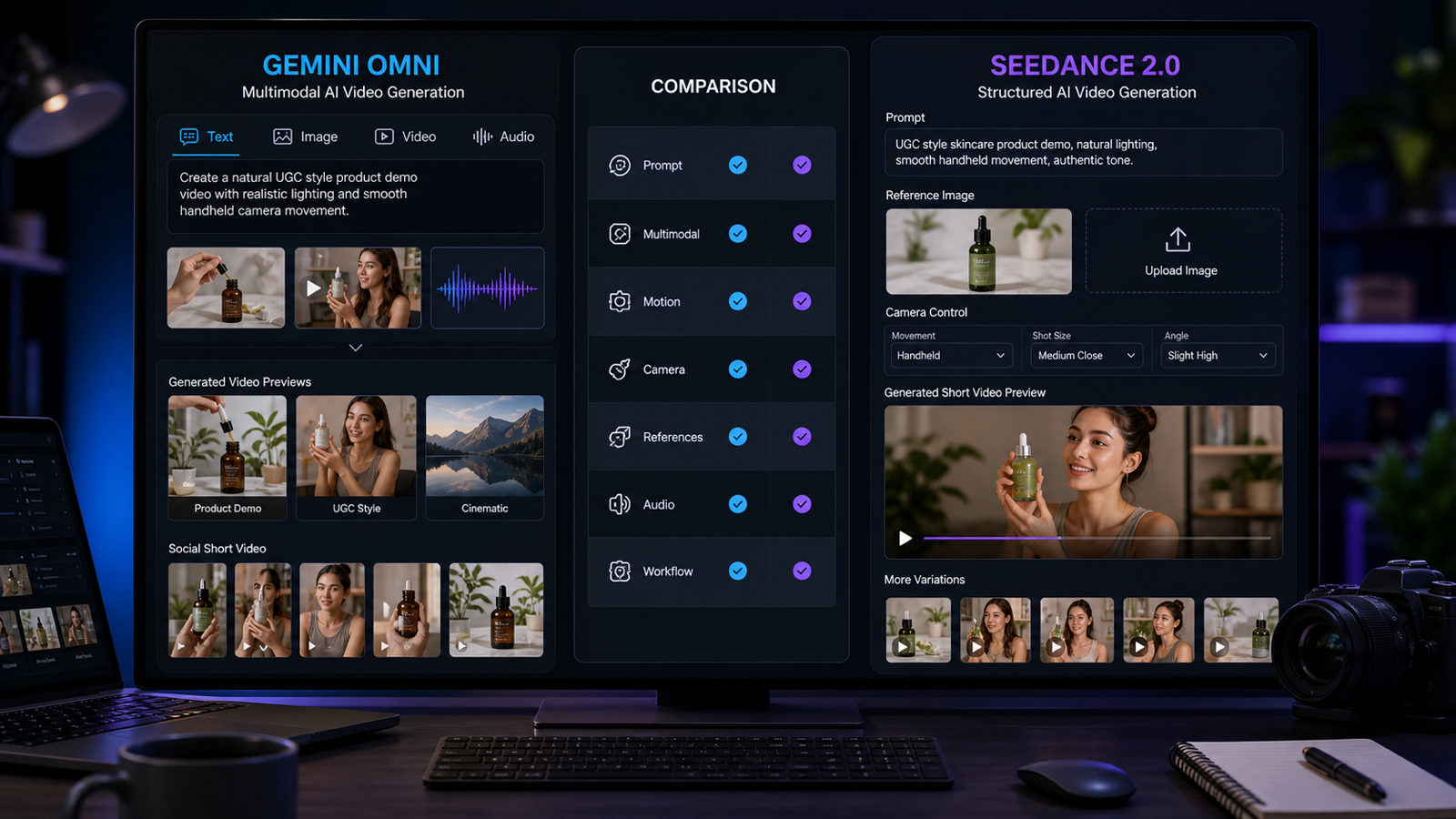

That’s why Hedra Omnia AI is getting attention. It’s part of a newer push toward performance-first video—where the model isn’t just moving lips, but trying to make the whole shot feel like a directed scene: expressions, subtle body motion, camera language, and atmosphere.

In this news-style guide, I’ll break down what Omnia is, what it’s best at, and how you can get strong results quickly with ready-to-use prompts and practical tips. Then I’ll show you how to run the same image + prompt + audio workflow using Hedra Character 3 on VideoWeb AI.

What is Hedra Omnia AI?

At a simple level, Hedra Omnia is designed for character-driven short videos built from:

- A reference image (your character)

- Audio (usually a voiceover)

- A prompt (your direction for camera + motion + environment)

The key difference versus basic lip-sync tools is intent. Omnia is aiming for a clip that feels like a performance—not just a moving face.

When it works well, you’ll notice:

- More natural micro-expressions

- Subtle breathing and posture shifts

- Gestures that match the audio rhythm

- Camera moves that feel deliberate (instead of random warping)

In other words: the character doesn’t just talk—they act.

Why it matters right now

We’re at a turning point: viewers are no longer impressed by an “AI avatar talking.” That novelty is over.

What people do notice now is whether the character feels believable:

- Does the timing match the voice?

- Do expressions change naturally?

- Does the camera feel intentional?

- Does the background feel attached to the shot?

That’s the shift Hedra Omnia represents—moving from “lip sync” toward “directable performance.”

And if you want to try this workflow today without overthinking it, the VideoWeb Hedra Character 3 model page gives you the same practical pipeline: upload image → add prompt → upload audio → generate.

What Omnia is best at (and what you should make first)

If you’re new to this style of character video, start with formats that naturally forgive small imperfections—and still look great when the acting is strong.

1) UGC-style clips (creator-to-camera)

These are perfect for quick product pitches, app demos, “I tried this so you don’t have to” videos, and creator-style reactions.

2) Explainers and tutorials

A calm, helpful delivery with light gestures often produces the most consistent results.

3) Podcast/interview snippets

Simple framing + minimal motion gives you a polished, believable performance.

4) Short cinematic monologues

If you describe lighting and camera movement clearly, you can get surprisingly film-like moments.

If you want to generate these formats quickly, Hedra Character 3 video generation on VideoWeb is a straightforward place to test your first clips.

The 60-second setup checklist (before you hit Generate)

You only need three ingredients—but each one matters more than people expect.

1) Reference image (your character)

Use a clean, well-lit image with:

- A clear face

- Minimal blur

- A consistent style (don’t mix realism + anime in the same workflow)

2) Audio (voiceover)

Keep it clean and short:

- MP3 is ideal

- Aim for 5–10 seconds when you’re iterating

- Avoid loud background noise

3) Prompt (your director’s note)

Don’t write a poem. Write a shot plan.

To test this pipeline in the simplest way, you can use the Hedra Character 3 image+audio workflow and paste one of the prompts below.
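The checklist above can be turned into a quick pre-flight check before you upload anything. This is a minimal sketch, not part of any Hedra or VideoWeb tooling: the function name `preflight`, the accepted image extensions, and the 80-word prompt limit are all assumptions chosen for illustration. Only the MP3 and 5–10 second guidance come from the checklist itself.

```python
from pathlib import Path

# Assumed list of image types; not taken from any official documentation.
IMAGE_EXTS = {".png", ".jpg", ".jpeg", ".webp"}
# Upper end of the 5-10 second range suggested for iteration.
MAX_ITERATION_SECONDS = 10.0
# Arbitrary illustrative cap to keep prompts short.
MAX_PROMPT_WORDS = 80


def preflight(image_path: str, audio_path: str,
              audio_seconds: float, prompt: str) -> list[str]:
    """Return a list of warnings; an empty list means the inputs look fine."""
    warnings = []
    if Path(image_path).suffix.lower() not in IMAGE_EXTS:
        warnings.append("image: unexpected file type")
    if Path(audio_path).suffix.lower() != ".mp3":
        warnings.append("audio: MP3 is the recommended format")
    if audio_seconds > MAX_ITERATION_SECONDS:
        warnings.append("audio: trim to 5-10 seconds while iterating")
    if len(prompt.split()) > MAX_PROMPT_WORDS:
        warnings.append("prompt: shorten it to a shot plan")
    return warnings


for warning in preflight("face.png", "voiceover.wav", 14.0,
                         "Medium close-up, gentle slow push-in."):
    print(warning)
```

You supply the audio duration yourself here (your editor or recorder will show it), which keeps the sketch free of third-party audio libraries.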

A prompting mindset that’s actually viewer-first

Most people get worse results because they prompt like they’re describing a fantasy scene.

Instead, prompt like a director:

- Scene: Where are we? What’s the mood?

- Shot: What framing? What single camera move?

- Performance: What expression and gesture style?

- Constraints: What should the model avoid?

Here’s a simple template you can reuse:

Prompt template

- Scene: “bright modern apartment, clean background”

- Shot: “medium close-up, gentle slow push-in”

- Performance: “subtle gestures near chest level, natural micro-expressions”

- Constraints: “keep face sharp, hands stable, no sudden camera jumps”

That structure alone will improve consistency.
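If you generate prompts in bulk, the four-part template above maps naturally onto a small data structure. The sketch below is one possible way to do that; `ShotPlan` and `to_prompt` are hypothetical names made up for this example, and the output is just a plain text prompt you would paste into the prompt box.

```python
from dataclasses import dataclass


@dataclass
class ShotPlan:
    """Four-part director's note; fields mirror the template above."""
    scene: str
    shot: str
    performance: str
    constraints: str

    def to_prompt(self) -> str:
        # Turn each non-empty part into one capitalised sentence.
        sentences = []
        for part in (self.scene, self.shot, self.performance, self.constraints):
            text = part.strip().rstrip(".")
            if text:
                sentences.append(text[0].upper() + text[1:] + ".")
        return " ".join(sentences)


plan = ShotPlan(
    scene="bright modern apartment, clean background",
    shot="medium close-up, gentle slow push-in",
    performance="subtle gestures near chest level, natural micro-expressions",
    constraints="keep face sharp, hands stable, no sudden camera jumps",
)
print(plan.to_prompt())
```

Keeping the four parts separate makes it easy to swap one of them later without touching the rest of the prompt.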

Ready-to-use Hedra Omnia prompts (copy/paste)

Below are prompts that work well because they’re specific, camera-light, and performance-focused. Replace any bracketed details if you want.

Tip: For your first attempts, keep the prompt short and keep the audio short. Once you get a clean result, then you can push style.

Prompt 1 — UGC product pitch (clean + confident)

Prompt: A confident creator speaks directly to camera in a bright modern apartment. Medium shot. Subtle natural hand gestures near chest level and occasional head nods while talking. Soft daylight window lighting, realistic smartphone video style. Gentle slow push-in. Keep face sharp, keep hands stable, no sudden camera jumps.

Prompt 2 — Explainer vibe (calm + helpful)

Prompt: A friendly presenter explains a topic in a tidy home office. Medium shot to medium close-up. Natural breathing, subtle eyebrow movement, small hand gestures to emphasize points. Warm soft lighting, shallow depth of field. Camera mostly static with a slight handheld feel. Keep the subject centered.

Prompt 3 — Street interview energy (fast + engaging)

Prompt: A street interview at dusk with soft bokeh city lights behind the speaker. Medium close-up. Lively facial expressions and small gestures near chest level while talking. Slight handheld camera sway. Cinematic realism. Keep face stable and avoid sudden movement.

Prompt 4 — Podcast clip (studio + minimal motion)

Prompt: A podcast studio shot with a clean background. Medium close-up. Calm delivery with minimal gestures, slight head turns, micro-expressions synced to the voice. Soft studio key light with gentle shadows. Camera locked off. Keep mouth movement natural and consistent.

Prompt 5 — Brand spokesperson (polished ad)

Prompt: A polished brand spokesperson delivers a short ad line in a modern studio. Medium shot. Confident but natural facial acting, restrained gestures, clear posture. Clean commercial lighting, crisp focus. Slow push-in from medium shot to close-up. Keep any text or logos stable.

Prompt 6 — Dramatic cinematic monologue (film look)

Prompt: A cinematic interior at night with moody practical lights. Medium close-up. Subtle emotional delivery, micro-expressions, minimal but intentional gestures. Filmic contrast, realistic skin texture. Slow orbit left by 15 degrees. Keep background consistent and avoid sudden motion.

Prompt 7 — How-to tutorial (friendly creator)

Prompt: A creator shares a quick tip in a bright kitchen. Medium shot. Friendly smile, occasional hand gestures as if pointing to steps. Soft daylight. Camera stable with a very slight handheld feel. Realistic social video style, natural pacing.

Prompt 8 — Reaction clip (social + expressive)

Prompt: A creator reacts to surprising news on camera in a cozy bedroom setup. Medium close-up. Expressive eyebrows and eye movement, small shoulder motion, natural laughter timing. Soft warm lighting, realistic phone camera look. Keep face clean and stable.

If you want to run these immediately, paste any prompt into Hedra Character 3 on VideoWeb, upload your image and MP3 audio, and generate.

Tips that genuinely improve results (not vague “prompt better” advice)

1) Give your gestures boundaries

Hands go weird when prompts are too open-ended. Instead of “use gestures,” try:

- “small gestures near chest level”

- “hands mostly below shoulder height”

- “restrained gestures”

It’s boring, but it works.

2) Choose one camera move

The easiest way to break a generation is to ask for too much camera language.

Pick one:

- “slow push-in”

- “slight handheld sway”

- “gentle orbit 10–20 degrees”

Not all at once.
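The one-camera-move rule is easy to check mechanically. Here is a rough sketch that scans a prompt for the three moves named above and reports which ones it finds. The keyword list and the function name `camera_moves_in` are assumptions for illustration; extend the list to match your own vocabulary.

```python
import re

# Keywords for the three camera moves mentioned above (illustrative, not exhaustive).
CAMERA_MOVES = ("push-in", "handheld", "orbit")


def camera_moves_in(prompt: str) -> list[str]:
    """Return the camera-move keywords found in the prompt, in CAMERA_MOVES order."""
    text = prompt.lower()
    return [
        move for move in CAMERA_MOVES
        if re.search(rf"\b{re.escape(move)}\b", text)
    ]


prompt = "Medium shot. Slow push-in with a slight handheld sway and a gentle orbit."
found = camera_moves_in(prompt)
if len(found) > 1:
    print("Too many camera moves, pick one:", ", ".join(found))
```

A keyword match is crude (it will not understand "camera locked off, no push-in"), so treat the result as a reminder rather than a verdict.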

3) Start with a simple background

Busy scenes can be amazing, but they increase the chance of warping.

Start in:

- a bright room

- a clean office

- a simple studio

Then level up once the performance looks right.

4) Audio pacing is a cheat code

Clear, steady voice audio produces better face timing.

If your audio is rushed or noisy, the model has less “clean rhythm” to follow.

5) Iterate like a director

If the result is almost right, don’t rewrite everything.

Change one variable per iteration:

- camera: static → slow push-in

- lighting: studio → daylight

- gestures: lively → restrained

You’ll converge faster.
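The one-variable-per-iteration habit can be sketched in a few lines. Assuming you keep your prompt as named parts, the hypothetical helper below produces one variant per change, so each new generation differs from the baseline in exactly one place. The part names and phrases are example values drawn from the list above.

```python
def one_change_variants(base: dict[str, str],
                        alternatives: dict[str, str]) -> list[tuple[str, str]]:
    """Return (changed_part, prompt) pairs, each differing from base in one part."""
    variants = []
    for key, value in alternatives.items():
        if key in base and base[key] != value:
            changed = dict(base)   # copy so the baseline stays untouched
            changed[key] = value
            variants.append((key, ", ".join(changed.values())))
    return variants


base = {
    "camera": "camera locked off",
    "lighting": "soft studio lighting",
    "gestures": "lively gestures",
}
alternatives = {
    "camera": "gentle slow push-in",
    "lighting": "soft daylight",
    "gestures": "restrained gestures",
}

for changed_part, prompt in one_change_variants(base, alternatives):
    print(f"[{changed_part}] {prompt}")
```

Because every variant is labelled with the part that changed, you can tell which adjustment actually improved the clip.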

Why I recommend Hedra Character 3 on VideoWeb AI for actually trying this

You can read about Omnia all day, but the real question is: can you get results quickly without friction?

That’s where VideoWeb’s implementation is useful. The UI is simple, and the pipeline matches exactly what most creators need: upload image, add prompt, upload audio.

How to use Hedra Character 3 on VideoWeb AI (simple step-by-step)

This walkthrough follows the inputs in the order you will use them: image, prompt, translate toggle, audio, generate.

Step 1 — Upload your character image

- Use a clear face and clean lighting.

- Avoid extreme angles if you care about realism.

- If your character is stylized (anime/3D), keep that style consistent.

Step 2 — Paste a prompt (start with short + specific)

For your first run, choose something safe like:

Bright modern room, medium close-up, subtle gestures near chest level while speaking, soft daylight, realistic phone video style, gentle slow push-in, keep face sharp and stable.

Step 3 — Decide whether to keep “Translate” on

- If you’re writing prompts in another language and want them converted to a clean English prompt style, keep it on.

- If you’re already writing direct, precise English prompts, turn it off to preserve your exact wording.

Step 4 — Upload your MP3 audio

- Keep it clean.

- Keep it short.

- If the pacing is too fast, re-record at a slightly slower pace and regenerate.

Step 5 — Generate and iterate

If the output is close but not perfect, tweak one line:

- “camera locked off” → “gentle slow push-in”

- “lively gestures” → “restrained gestures”

- “street background” → “simple studio”

This is the fastest way to get a clip you’d actually post.

To start immediately, try Hedra Character 3 on VideoWeb AI.

Mini “Prompt Pack” for Hedra Character 3 (quick wins)

If you want a fast-start bundle, copy these short prompts into the VideoWeb Hedra Character 3 model page and swap the scene details.

- UGC Hook

Medium close-up, bright apartment, energetic delivery, subtle gestures, slight handheld sway, realistic social video.

- Product Demo

Clean studio, confident spokesperson, slow push-in, restrained gestures, commercial lighting, stable hands.

- Podcast Clip

Studio background, calm tone, minimal motion, clear micro-expressions, camera locked off.

- Cinematic Beat

Moody night interior, filmic contrast, minimal gestures, slow orbit 15 degrees, realistic texture.

- Street Interview

Dusk city bokeh, lively expression, slight handheld camera, subject centered, stable face.

Quick FAQ

Do prompts need to be long?

No. Short, specific prompts usually beat long “novel prompts,” especially when you’re trying to get a stable first take.

Why do hands look strange sometimes?

Hands tend to break when gestures are too open-ended. Add clear limits, like:

- “small gestures near chest level”

- “hands mostly below shoulder height”

- “keep hands stable”

How do I make it look like a real creator video?

These phrases help a lot:

- “realistic phone camera style”

- “slight handheld sway”

- “natural room lighting”

How do I make a longer video?

Generate multiple short clips (better quality, easier control):

- Hook

- Main point

- CTA

Then stitch them together in your editor.
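If you would rather script the stitch than open an editor, one option is ffmpeg's concat demuxer. The sketch below only builds the list file text and the command; it does not run anything. The file names and the helper `concat_plan` are hypothetical, and `-c copy` assumes all clips share the same codec, resolution, and frame rate, which you should verify for your own outputs.

```python
def concat_plan(clips: list[str], list_path: str = "clips.txt",
                output: str = "final.mp4") -> tuple[str, list[str]]:
    """Return (list file text, ffmpeg command) for joining clips in order."""
    if any("'" in clip for clip in clips):
        # Quotes need escaping in the list file; keep this sketch simple.
        raise ValueError("clip paths with single quotes are not supported here")
    list_text = "".join(f"file '{clip}'\n" for clip in clips)
    command = ["ffmpeg", "-f", "concat", "-safe", "0",
               "-i", list_path, "-c", "copy", output]
    return list_text, command


list_text, command = concat_plan(["hook.mp4", "main_point.mp4", "cta.mp4"])
print(list_text)
print(" ".join(command))
```

To use it, write `list_text` to `clips.txt` and run the printed command in the same folder as your clips. If the clips differ in encoding, drop `-c copy` so ffmpeg re-encodes them instead.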

Final takeaway

Hedra Omnia AI is a strong sign of where character video is heading: performance-first clips that feel more like directed content and less like “AI mouth movement.”

If you want the simplest way to test this workflow right now, pick one prompt, grab one short MP3 voiceover, and run 2–3 iterations with the Hedra Character 3 model on VideoWeb AI.
